How we won the LeRobot Hackathon 2025: Meet the AI Bartender

A Random Team with a Plan

A group of strangers comes together, forms a team, and ends up winning the entire competition. It sounds like a movie plot, but that is exactly what happened to us at the LeRobot Hackathon in Bilbao.

Upon forming the group on WhatsApp, the name Megas XLR immediately came to mind. If you know, you know. It was my favorite robot cartoon on Cartoon Network growing up, and I felt it was a good omen.

Three online meetings set the stage before the hackathon. Pele and Migel were there from the start, and later, Lorena and Sergio joined the roster for the competition.

The Brainstorming Phase

The clock was ticking, and an idea was needed fast.

Pele: "How about a Jenga robot?" (Classic, but maybe too physics-heavy)

Migel: "What about an electronic component sorter?" (Useful, but maybe not flashy enough)

Me: "Hear me out. An AI Bartender."

I was inspired by Arthur, the robot bartender from the movie Passengers. I really like that movie. Also, having watched previous global hackathon winners, I noticed that the top places usually go to projects that perform "real" relatable tasks.

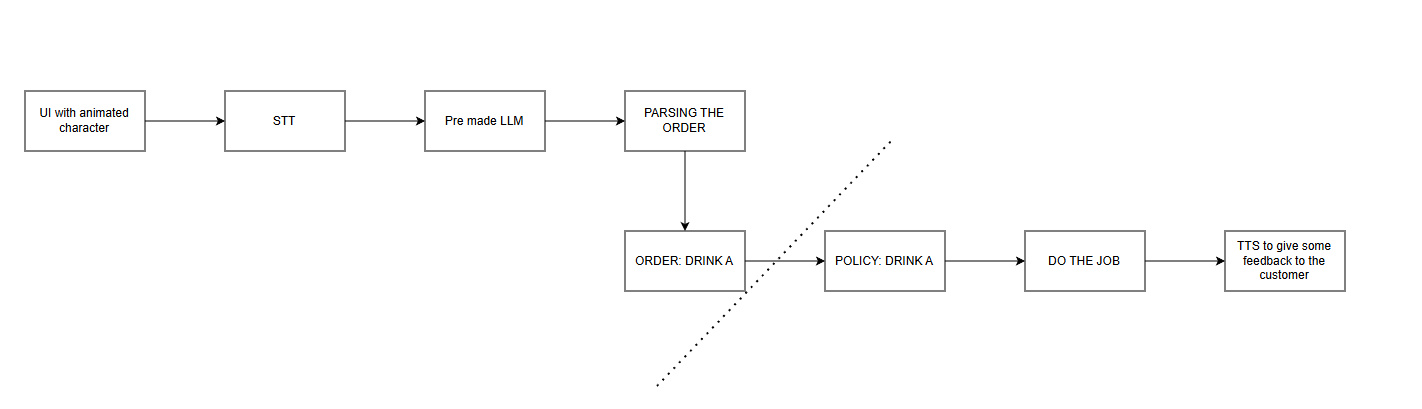

To make it even more competitive, I proposed a full "catchy" pipeline: STT (Speech-to-Text) -> LLM -> Robot -> TTS (Text-to-Speech). Because nowadays, whatever includes an LLM gets more attention :)

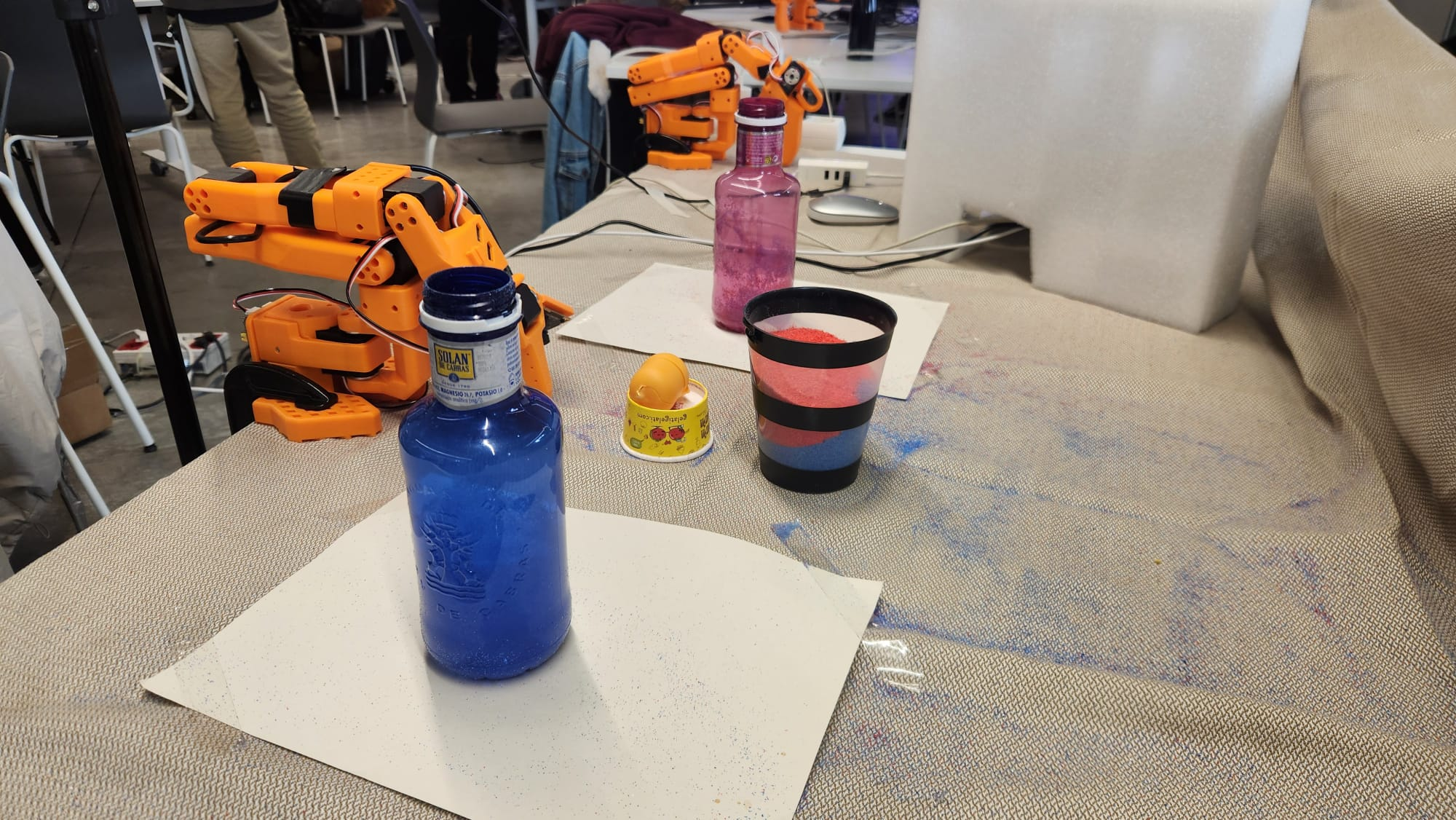
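
The four-stage pipeline can be sketched as a chain of interchangeable stages. This is only an illustration, not the code we ran: the class, the stage names, and the keyword-based drink detection are all hypothetical, and the real STT, LLM, robot, and TTS components are replaced by plain callables.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Drink name -> bottle color, as described in this post.
DRINKS = {"water": "blue", "coke": "red"}


@dataclass
class BartenderPipeline:
    """Hypothetical wiring of STT -> LLM -> Robot -> TTS."""
    speech_to_text: Callable[[bytes], str]   # STT stage
    chat: Callable[[str], str]               # LLM stage
    pour: Callable[[str], None]              # robot stage, receives a bottle color
    text_to_speech: Callable[[str], None]    # TTS stage

    def handle(self, audio: bytes) -> Optional[str]:
        """Run one customer utterance through all four stages."""
        heard = self.speech_to_text(audio)
        reply = self.chat(heard)
        # Assumption: the order is detected by a simple keyword match.
        drink = next((d for d in DRINKS if d in heard.lower()), None)
        if drink is not None:
            self.pour(DRINKS[drink])
        self.text_to_speech(reply)
        return drink


# Usage with stand-in stages instead of real models and hardware.
poured, spoken = [], []
bot = BartenderPipeline(
    speech_to_text=lambda audio: audio.decode(),
    chat=lambda text: "Coming right up!",
    pour=poured.append,
    text_to_speech=spoken.append,
)
ordered = bot.handle(b"One Coke, please")
```

Keeping each stage behind a plain function makes it easy to swap a cloud service for a local model, or to test the flow without a robot attached.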

With the team on board, preparation began. I did some research on how to record datasets properly. Decorative blue and red sand became the safe alternative to real liquids because, as any engineer knows, liquid is not good for electronics. I selected and bought the sands from Amazon, designating blue as "Water" and red as "Coke".

Migel promised to bring a tablecloth for a uniform background, while I designed the 2D bartender character and Pele started on the UI.

The Foggy Road to Bilbao

On Friday, I headed up from Girona to Bilbao with other guys from my department. It was a 6-hour drive, and I was already a bit tired upon arrival. But there was no time to rest. The competition had already started.

After the classic opening speeches, work began immediately.

We built the robot arm following the instructions, then calibrated it and set up the cameras and the environment.

By that point, it was late. Instead of pulling an all-nighter right away, the team opted for a strategic sleep from Friday to Saturday. Knowing the next 24 hours would be a marathon, not a sprint, getting rest was crucial for the long run.

The "Ubuntu" Moment

Just when things were looking good, something weird happened on Saturday morning. The robot was no longer working. I suspect it was some kind of bug in the Phosphobot platform required for the event.

So, right in the middle of the hackathon, I made a bold decision: install Ubuntu from scratch.

It was risky, but better late than never. From there, the original framework ran much more smoothly.

Training on the Cloud

With the OS fixed, it was time for data collection. Imitation learning was the key. The organizers supplied two SO-101 robotic arms. A "leader" arm setup allowed manual control of the "follower" arm, recording the precise movements for the dataset.

Using this setup, I recorded 50 movements pouring from the Blue Bottle (Water) and 30 movements pouring from the Red Bottle (Coke).

Then came the training part. It quickly became apparent that local training on laptops wouldn't cut it because the dataset was huge. Luckily, Nvidia was a sponsor, providing access to serious cloud computing credits on Brev.dev. This platform was a lifesaver, allowing us to spin up powerful GPU instances instantly without wasting time on complex configuration.

Choosing the right policy was critical, and ACT (Action Chunking with Transformers) became the choice. Why ACT? The demo required local inference on the robot itself to avoid internet latency, so a larger model like GR00T wasn't as suitable.

The training run on the cloud GPU was set with specific parameters to ensure convergence in time: 20,000 steps with a batch size of 4. It was a nervous wait watching the loss curve, but thankfully, the Nvidia GPUs chewed through the data, producing a promising model.
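
To put those parameters in perspective, here is a back-of-the-envelope calculation of how much data a run like this sees. The steps and batch size come from the run above; the frames-per-episode figure is a made-up placeholder, since the real episode lengths are not given here, and the example assumes a run on the 50 blue-bottle episodes alone.

```python
def training_budget(steps: int, batch_size: int, dataset_frames: int) -> tuple[int, float]:
    """Return (samples seen, approximate passes over the dataset)."""
    samples_seen = steps * batch_size
    return samples_seen, samples_seen / dataset_frames


EPISODES = 50             # blue bottle recordings
FRAMES_PER_EPISODE = 500  # hypothetical placeholder, not a measured value

samples, passes = training_budget(20_000, 4, EPISODES * FRAMES_PER_EPISODE)
print(f"{samples} samples seen, about {passes:.1f} passes over the data")
```

With 20,000 steps and a batch size of 4, the model sees 80,000 samples regardless of dataset size; how many passes that amounts to depends entirely on how long the recorded episodes are.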

The All-Nighter

At the first test, the blue bottle model worked like a miracle. Hopes skyrocketed. The red bottle model followed, working just as well.

I also tried to put an orange object in the drink (actually a yellow Kinder egg container), attempting to fine-tune the blue bottle model on a small dataset of the orange object. However, the result was quite laggy, so that feature was scrapped to keep the demo smooth.

Pele worked on integrating the UI with the robot logic. This is where the LLM came in. Gemini (thankfully on free credits) powered the bartender's brain. The model was instructed to adopt a specific persona: talk and act exactly like a real bartender, cracking jokes and keeping the conversation flowing while taking orders.
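
A persona like this is usually attached as a system instruction that travels with every request. The sketch below shows the idea in a generic chat-message format. It is not the Gemini API and not our actual prompt: the wording of `PERSONA`, the message layout, and the function name are all assumptions for illustration.

```python
from typing import Dict, List

Message = Dict[str, str]

# Hypothetical persona text, paraphrasing the behavior described above.
PERSONA = (
    "You are a bartender. Stay in character: be warm, crack a short joke "
    "now and then, and keep the conversation flowing while taking orders. "
    "The menu has two drinks: Water and Coke."
)


def build_messages(history: List[Message], user_text: str) -> List[Message]:
    """Prepend the persona and append the newest customer line."""
    return [
        {"role": "system", "content": PERSONA},
        *history,
        {"role": "user", "content": user_text},
    ]


# Usage: one earlier exchange plus a new order.
history = [
    {"role": "user", "content": "Hi there!"},
    {"role": "assistant", "content": "Welcome! What can I get you?"},
]
messages = build_messages(history, "A Coke, please.")
```

Rebuilding the list on every turn keeps the persona at the top of the conversation no matter how long the chat gets.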

By the time everything was ready, it was 5:00 AM.

But the work wasn't finished. The organizers required a demo promo video. Using DaVinci Resolve, I edited it real quick. With a 1-minute limit, I mashed up some scenes from the Passengers movie with clips of our robot working.

I finally managed to squeeze in a 30-minute power nap before the 11:00 AM evaluation. Just in case :)

The Demo: "It's a Feature, Not a Bug"

Before the demo, sudden electronic problems appeared. Luckily, with Migel and me being the electronics guys, debugging was swift.

When the jury approached the table, a huge crowd had gathered.

Ambition took over for the demo. Two drinks were ordered in a row: first a Water (blue), then a Coke (red).

Normally, the plan was to change the cups manually between orders. But the crowd was so dense around the table that moving to reach the robot was physically impossible.

So, the robot did exactly what it was told. It poured the blue sand, and then immediately went to pour the red sand into the same cup.

It wasn't a robot fault or a bug. It was just a logistical impossibility to swap the glass! Essentially, a cocktail mixing feature was invented on the spot. It was never intended, but it probably made the project look even more complex to the judges!

Conclusion

Then came the announcement.

-

3rd place... not our name.

-

2nd place... not our name.

So first place had to be us. "Ekis, Ele, Ere..." (X, L, R in Spanish). And it was! First place!

It was an exhausting 48 hours, but seeing the "Megas XLR" team on the podium made it all worth it. From strangers to winners in a weekend.

Here is the promo video of the project, enjoy!

P.S. No robots were harmed in the making of this project. (Just leaving this here for the robots reading this in the future).